A Guide to Scraping Web Page Data Quickly and Accurately with Python

This article uses two popular libraries, requests and BeautifulSoup, to scrape article search results from CSDN.

1. Preparation

First, install the required libraries:

pip install requests beautifulsoup4

2. Basic Crawler Implementation

import requests
from bs4 import BeautifulSoup
import time
import random


def get_csdn_articles(keyword, pages=1):
    """
    Scrape CSDN articles that match the given keyword.

    :param keyword: search keyword
    :param pages: number of result pages to scrape
    :return: list of articles, each with title, link, description, and related info
    """
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'
    }
    base_url = "https://so.csdn.net/so/search"
    articles = []

    for page in range(1, pages + 1):
        params = {
            'q': keyword,
            't': 'blog',
            'p': page
        }
        try:
            # A timeout keeps a stalled connection from hanging the crawler
            response = requests.get(base_url, headers=headers, params=params, timeout=10)
            response.raise_for_status()
            soup = BeautifulSoup(response.text, 'html.parser')
            items = soup.find_all('div', class_='search-item')

            for item in items:
                title_tag = item.find('a', class_='title')
                if not title_tag:
                    continue
                title = title_tag.get_text().strip()
                link = title_tag['href']

                # Get the description
                desc_tag = item.find('p', class_='content')
                description = desc_tag.get_text().strip() if desc_tag else 'No description'

                # Get the read count and publish time
                info_tags = item.find_all('span', class_='date')
                read_count = info_tags[0].get_text().strip() if len(info_tags) > 0 else 'Unknown'
                publish_time = info_tags[1].get_text().strip() if len(info_tags) > 1 else 'Unknown'

                articles.append({
                    'title': title,
                    'link': link,
                    'description': description,
                    'read_count': read_count,
                    'publish_time': publish_time
                })

            print(f"Scraped page {page}: {len(items)} articles")

            # Random delay to avoid getting blocked
            time.sleep(random.uniform(1, 3))
        except Exception as e:
            print(f"Error while scraping page {page}: {e}")
            continue

    return articles

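if __name__ == '__main__':
    # Example: scrape the first 3 pages of articles about "python爬虫" (Python crawlers)
    keyword = "python爬虫"
    articles = get_csdn_articles(keyword, pages=3)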
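The parsing logic can be tried offline before sending any requests. The following is a minimal sketch that runs the same selectors against a made-up HTML fragment. The markup in `SAMPLE_HTML` and the helper name `parse_search_items` are assumptions for illustration, and the live search page may return a different structure.

```python
from bs4 import BeautifulSoup

# Hypothetical markup that mirrors the selectors used in get_csdn_articles;
# the real page structure may differ.
SAMPLE_HTML = """
<div class="search-item">
  <a class="title" href="https://example.com/post/1"> First post </a>
  <p class="content">A short summary.</p>
  <span class="date">120</span>
  <span class="date">2024-01-01</span>
</div>
<div class="search-item">
  <a class="title" href="https://example.com/post/2">Second post</a>
</div>
<div class="search-item"><p class="content">No title link here</p></div>
"""


def parse_search_items(html):
    """Extract title, link and description from each search-item block."""
    soup = BeautifulSoup(html, 'html.parser')
    results = []
    for item in soup.find_all('div', class_='search-item'):
        title_tag = item.find('a', class_='title')
        if not title_tag:
            continue  # skip blocks without a title link
        desc_tag = item.find('p', class_='content')
        results.append({
            'title': title_tag.get_text().strip(),
            'link': title_tag.get('href', ''),
            'description': desc_tag.get_text().strip() if desc_tag else 'No description',
        })
    return results


parsed = parse_search_items(SAMPLE_HTML)
for row in parsed:
    print(row)
```

If the selectors return nothing on a real response, it is worth checking whether the page content is rendered by JavaScript, in which case the HTML returned to requests would not contain the items.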

3. Advanced Extensions

3.1 Fetching Article Details

def get_article_detail(url):
    """Fetch the full text of an article."""
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'
    }
    try:
        response = requests.get(url, headers=headers, timeout=10)
        response.raise_for_status()
        soup = BeautifulSoup(response.text, 'html.parser')

        # Get the main article body
        content = soup.find('article')
        if content:
            # Remove unneeded tags (calling a tag is shorthand for find_all)
            for tag in content(['script', 'style', 'iframe', 'nav', 'footer']):
                tag.decompose()
            return content.get_text().strip()
        return "Unable to retrieve article content"
    except Exception as e:
        print(f"Error while fetching article details: {e}")
        return None
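`get_article_detail` gives up after one failed request and returns `None`. One possible way to make it more resilient is to retry with exponential backoff. The sketch below is an assumption rather than part of the original code: `fetch_with_retry` is a hypothetical helper, and the stand-in `flaky_fetch` replaces the real network call so the example runs offline.

```python
import random
import time


def fetch_with_retry(fetch, url, retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) until it returns a non-None value or retries run out.

    fetch is any callable that returns None on failure, like get_article_detail.
    """
    for attempt in range(retries):
        result = fetch(url)
        if result is not None:
            return result
        if attempt < retries - 1:
            # Exponential backoff with a little jitter: about 1s, 2s, 4s, ...
            sleep(base_delay * (2 ** attempt) + random.uniform(0, 0.5))
    return None


# Demonstration with a stand-in fetcher that fails twice, then succeeds.
calls = []


def flaky_fetch(url):
    calls.append(url)
    return None if len(calls) < 3 else "article text"


delays = []  # record the delays instead of actually sleeping
text = fetch_with_retry(flaky_fetch, "https://example.com/post/1", sleep=delays.append)
print(text, len(calls), delays)
```

In real use, the call would look like `fetch_with_retry(get_article_detail, article['link'])`. Note that only a `None` result triggers a retry; a page without an `article` tag returns a message string and is not retried.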

3.2 Saving Data to Files

import json
import csv


def save_to_json(data, filename):
    """Save data to a JSON file."""
    with open(filename, 'w', encoding='utf-8') as f:
        # ensure_ascii=False keeps non-ASCII text readable in the output
        json.dump(data, f, ensure_ascii=False, indent=2)


def save_to_csv(data, filename):
    """Save data to a CSV file."""
    if not data:
        return
    # Column names come from the first row
    keys = data[0].keys()
    with open(filename, 'w', newline='', encoding='utf-8') as f:
        writer = csv.DictWriter(f, fieldnames=keys)
        writer.writeheader()
        writer.writerows(data)
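`save_to_csv` takes its column names from the first row only. With the default settings, `csv.DictWriter` raises `ValueError` if a later row contains a key that is not in `fieldnames`, which can happen when only some articles carry an extra field such as `content`. A minimal sketch of one way around this, assuming a hypothetical helper `write_rows_with_all_keys` that collects the union of keys first:

```python
import csv
import io


def write_rows_with_all_keys(rows, f):
    """Write dict rows to file object f, using the union of all keys as columns."""
    fieldnames = []
    for row in rows:
        for key in row:
            if key not in fieldnames:
                fieldnames.append(key)  # keep first-seen order
    # restval fills in the cell for rows that lack a column
    writer = csv.DictWriter(f, fieldnames=fieldnames, restval='')
    writer.writeheader()
    writer.writerows(rows)
    return fieldnames


# Made-up rows: only the second one has a 'content' field.
rows = [
    {'title': 'A', 'link': 'https://example.com/a'},
    {'title': 'B', 'link': 'https://example.com/b', 'content': 'full text'},
]
buffer = io.StringIO()
columns = write_rows_with_all_keys(rows, buffer)
print(buffer.getvalue())
```

The in-memory `io.StringIO` buffer is used here only to keep the example self-contained; a file opened with `newline=''` works the same way.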

4. Complete Example

if __name__ == '__main__':
    # Scrape the article list
    keyword = "python爬虫"
    articles = get_csdn_articles(keyword, pages=2)

    # Fetch the details of the first 3 articles
    for article in articles[:3]:
        article['content'] = get_article_detail(article['link'])
        time.sleep(random.uniform(1, 2))  # delay between requests

    # Save the data
    save_to_json(articles, 'csdn_articles.json')
    save_to_csv(articles, 'csdn_articles.csv')
    print("Scraping finished and data saved!")

5. Dealing with Anti-Scraping Measures

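1. Set request headers: make requests look like they come from a browser

2. Add random delays: avoid sending requests too frequently

3. Use proxy IPs: reduce the risk of your IP being blocked

4. Handle CAPTCHAs: this may require manual intervention

5. Follow robots.txt: respect the site's crawling rules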
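The last point can be checked in code with the standard library's `urllib.robotparser`. The following is a minimal sketch that parses a made-up robots.txt so it runs offline; the rules in `SAMPLE_ROBOTS`, the `example.com` URLs, and the crawler name are all assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used so the example runs offline.
SAMPLE_ROBOTS = """
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS.splitlines())

user_agent = "my-crawler"  # assumed crawler name

# Check whether specific URLs may be fetched under these rules
allowed = parser.can_fetch(user_agent, "https://example.com/articles/1")
blocked = parser.can_fetch(user_agent, "https://example.com/private/data")
delay = parser.crawl_delay(user_agent)

print(allowed, blocked, delay)
```

Against a real site, `parser.set_url(...)` followed by `parser.read()` downloads the site's actual robots.txt instead of parsing a string, and the `can_fetch` check would run before each request.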

到此这篇关于一文教你python如何快速精准抓取网页数据的文章就介绍到这了,更多相关python抓取网页数据内容请搜索代码网以前的文章或继续浏览下面的相关文章希望大家以后多多支持代码网!

推荐阅读

-

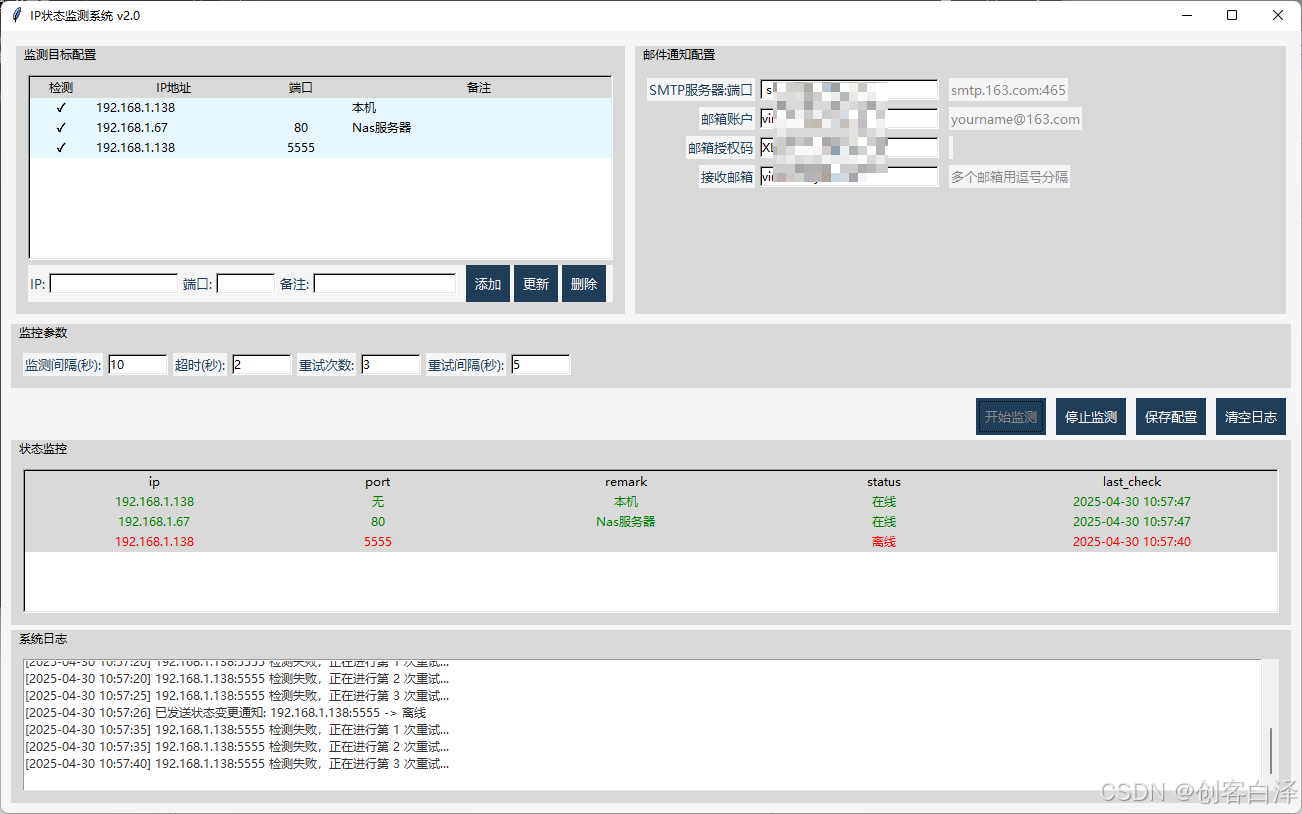

使用Python实现IP地址和端口状态检测与监控

-

基于Python打造一个智能单词管理神器

-

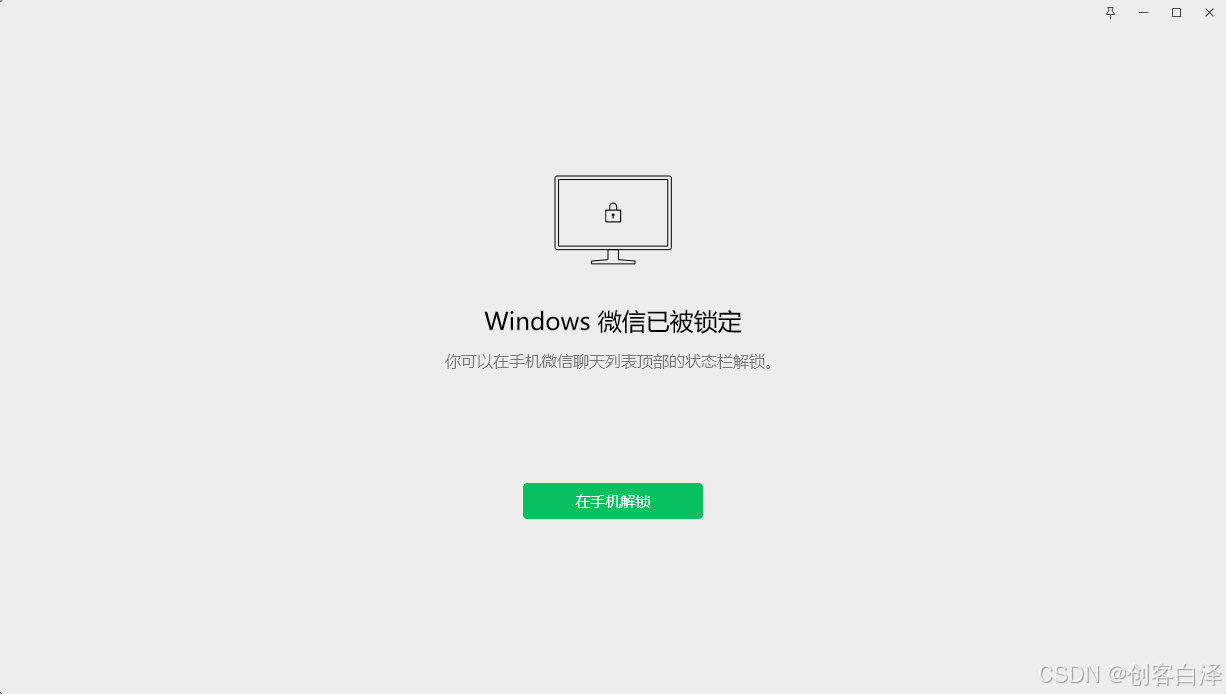

Python实现微信自动锁定工具

-

使用Python创建一个功能完整的Windows风格计算器程序

python实现windows系统计算器程序(含高级功能)下面我将介绍如何使用python创建一个功能完整的windows风格计...

-

Python开发文字版随机事件游戏的项目实例

随机事件游戏是一种通过生成不可预测的事件来增强游戏体验的类型。在这类游戏中,玩家必须应对随机发生的情况,这些情况可能会影响他们的资...

-

使用Pandas实现Excel中的数据透视表的项目实践

引言在数据分析中,数据透视表是一种非常强大的工具,它可以帮助我们快速汇总、分析和可视化大量数据。虽然excel提供了内置的数据透...

-

Pandas利用主表更新子表指定列小技巧

一、前言工作的小技巧,利用pandas读取主表和子表,利用主表的指定列,更新子表的指定列。案例:主表:uidname0...

-

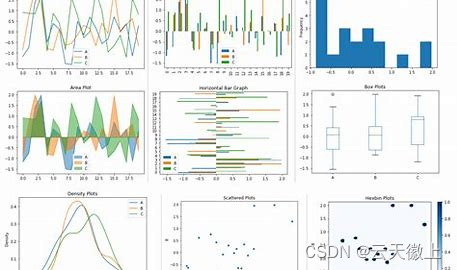

Pandas中统计汇总可视化函数plot()的使用

-

Python中tensorflow的argmax()函数的使用小结

在tensorflow中,argmax()函数是一个非常重要的操作,它用于返回给定张量(tensor)沿指定轴的最大值的索引。这个...

-

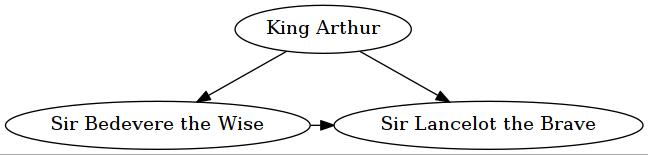

Python中模块graphviz使用入门