How to Implement Classification Algorithms in Python with the sklearn Library

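How do you implement a classification algorithm in Python with the sklearn library? Many newcomers are unsure where to start, so this article walks through a complete script that trains and evaluates several classifiers on the same data set.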

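scikit-learn is already included in Anaconda. It can also be installed from the source package available on the official site. The code in this article wraps the machine learning algorithms that follow; to test them all in one run, you only need to modify the data loading function: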

# coding=utf-8
'''
Created on June 4, 2016

@author: bryan
'''
import time
import pickle

import pandas as pd
from sklearn import metrics

# Multinomial Naive Bayes Classifier
def naive_bayes_classifier(train_x, train_y):
    from sklearn.naive_bayes import MultinomialNB
    model = MultinomialNB(alpha=0.01)
    model.fit(train_x, train_y)
    return model

# KNN Classifier
def knn_classifier(train_x, train_y):
    from sklearn.neighbors import KNeighborsClassifier
    model = KNeighborsClassifier()
    model.fit(train_x, train_y)
    return model

# Logistic Regression Classifier
def logistic_regression_classifier(train_x, train_y):
    from sklearn.linear_model import LogisticRegression
    model = LogisticRegression(penalty='l2')
    model.fit(train_x, train_y)
    return model

# Random Forest Classifier
def random_forest_classifier(train_x, train_y):
    from sklearn.ensemble import RandomForestClassifier
    model = RandomForestClassifier(n_estimators=8)
    model.fit(train_x, train_y)
    return model

# Decision Tree Classifier
def decision_tree_classifier(train_x, train_y):
    from sklearn import tree
    model = tree.DecisionTreeClassifier()
    model.fit(train_x, train_y)
    return model

# GBDT (Gradient Boosting Decision Tree) Classifier
def gradient_boosting_classifier(train_x, train_y):
    from sklearn.ensemble import GradientBoostingClassifier
    model = GradientBoostingClassifier(n_estimators=200)
    model.fit(train_x, train_y)
    return model

# SVM Classifier
def svm_classifier(train_x, train_y):
    from sklearn.svm import SVC
    model = SVC(kernel='rbf', probability=True)
    model.fit(train_x, train_y)
    return model

# SVM Classifier using cross validation
def svm_cross_validation(train_x, train_y):
    # sklearn.grid_search was removed in scikit-learn 0.20;
    # GridSearchCV is imported from sklearn.model_selection instead
    from sklearn.model_selection import GridSearchCV
    from sklearn.svm import SVC
    model = SVC(kernel='rbf', probability=True)
    param_grid = {'C': [1e-3, 1e-2, 1e-1, 1, 10, 100, 1000], 'gamma': [0.001, 0.0001]}
    grid_search = GridSearchCV(model, param_grid, n_jobs=1, verbose=1)
    grid_search.fit(train_x, train_y)
    best_parameters = grid_search.best_estimator_.get_params()
    for para, val in list(best_parameters.items()):
        print(para, val)
    # Train a fresh SVC on the full training set with the best C and gamma found
    model = SVC(kernel='rbf', C=best_parameters['C'], gamma=best_parameters['gamma'], probability=True)
    model.fit(train_x, train_y)
    return model

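def read_data(data_file):
    data = pd.read_csv(data_file)
    # The first 90% of the rows are used for training, the last 10% for testing
    train = data[:int(len(data) * 0.9)]
    test = data[int(len(data) * 0.9):]
    # The 'label' column holds the class; all other columns are features
    train_y = train.label
    train_x = train.drop('label', axis=1)
    test_y = test.label
    test_x = test.drop('label', axis=1)
    return train_x, train_y, test_x, test_y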

if __name__ == '__main__':
    data_file = "H:\\Research\\data\\trainCG.csv"
    thresh = 0.5
    model_save_file = None
    model_save = {}

    test_classifiers = ['NB', 'KNN', 'LR', 'RF', 'DT', 'SVM', 'SVMCV', 'GBDT']
    classifiers = {'NB': naive_bayes_classifier,
                   'KNN': knn_classifier,
                   'LR': logistic_regression_classifier,
                   'RF': random_forest_classifier,
                   'DT': decision_tree_classifier,
                   'SVM': svm_classifier,
                   'SVMCV': svm_cross_validation,
                   'GBDT': gradient_boosting_classifier
                   }

    print('reading training and testing data...')
    train_x, train_y, test_x, test_y = read_data(data_file)

    # Train each classifier in turn and report its timing and test metrics
    for classifier in test_classifiers:
        print('******************* %s ********************' % classifier)
        start_time = time.time()
        model = classifiers[classifier](train_x, train_y)
        print('training took %fs!' % (time.time() - start_time))
        predict = model.predict(test_x)
        if model_save_file is not None:
            model_save[classifier] = model
        precision = metrics.precision_score(test_y, predict)
        recall = metrics.recall_score(test_y, predict)
        print('precision: %.2f%%, recall: %.2f%%' % (100 * precision, 100 * recall))
        accuracy = metrics.accuracy_score(test_y, predict)
        print('accuracy: %.2f%%' % (100 * accuracy))

    # Save all trained models to one file if a path was given
    if model_save_file is not None:
        with open(model_save_file, 'wb') as f:
            pickle.dump(model_save, f)

The test results are as follows:

reading training and testing data...
******************* NB ********************
training took 0.004986s!
precision: 78.08%, recall: 71.25%
accuracy: 74.17%
******************* KNN ********************
training took 0.017545s!
precision: 97.56%, recall: 100.00%
accuracy: 98.68%
******************* LR ********************
training took 0.061161s!
precision: 89.16%, recall: 92.50%
accuracy: 90.07%
******************* RF ********************
training took 0.040111s!
precision: 96.39%, recall: 100.00%
accuracy: 98.01%
******************* DT ********************
training took 0.004513s!
precision: 96.20%, recall: 95.00%
accuracy: 95.36%
******************* SVM ********************
training took 0.242145s!
precision: 97.53%, recall: 98.75%
accuracy: 98.01%
******************* SVMCV ********************
Fitting 3 folds for each of 14 candidates, totalling 42 fits
[Parallel(n_jobs=1)]: Done 42 out of 42 | elapsed: 6.8s finished
probability True
verbose False
coef0 0.0
degree 3
tol 0.001
shrinking True
cache_size 200
gamma 0.001
max_iter -1
C 1000
decision_function_shape None
random_state None
class_weight None
kernel rbf
training took 7.434668s!
precision: 98.75%, recall: 98.75%
accuracy: 98.68%
******************* GBDT ********************
training took 0.521916s!
precision: 97.56%, recall: 100.00%
accuracy: 98.68%
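The script reads a local CSV file that readers will not have, so the numbers above cannot be reproduced directly. As a minimal sketch of swapping the data loading function, the block below uses scikit-learn's built-in breast cancer data set in place of the CSV. The function name `read_builtin_data`, the choice of data set, and the shuffled, stratified split via `train_test_split` are all assumptions made for illustration; the original script instead takes the first 90% of the rows in file order. Only the KNN classifier is trained here to keep the example short.

```python
from sklearn import metrics
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier


def read_builtin_data(test_size=0.1, seed=0):
    """Hypothetical stand-in for read_data(); returns values in the same order."""
    # Assumption: the built-in breast cancer data set replaces the article's CSV file
    features, labels = load_breast_cancer(return_X_y=True)
    # Shuffled, stratified 90/10 split (differs from the original first-90% slicing)
    train_x, test_x, train_y, test_y = train_test_split(
        features, labels, test_size=test_size, random_state=seed, stratify=labels)
    return train_x, train_y, test_x, test_y


train_x, train_y, test_x, test_y = read_builtin_data()

# Same train / predict / score steps as the main loop of the script
model = KNeighborsClassifier()
model.fit(train_x, train_y)
predict = model.predict(test_x)

precision = metrics.precision_score(test_y, predict)
recall = metrics.recall_score(test_y, predict)
accuracy = metrics.accuracy_score(test_y, predict)
print('precision: %.2f%%, recall: %.2f%%' % (100 * precision, 100 * recall))
print('accuracy: %.2f%%' % (100 * accuracy))
```

Because the return order matches `read_data`, the same function could be called in the main block in place of `read_data(data_file)`. The features in this data set are non-negative, which `MultinomialNB` requires. The metrics will differ from the results listed above, since the data is different.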

看完上述内容是否对您有帮助呢?如果还想对相关知识有进一步的了解或阅读更多相关文章,请关注恰卡编程网行业资讯频道,感谢您对恰卡编程网的支持。

推荐阅读

-

Python中怎么动态声明变量赋值

这篇文章将为大家详细讲解有关Python中怎么动态声明变量赋值,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读完这篇文...

-

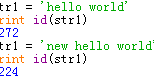

python中变量的存储原理是什么

-

Python中怎么引用传递变量赋值

这篇文章将为大家详细讲解有关Python中怎么引用传递变量赋值,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读完这篇文...

-

python中怎么获取程序执行文件路径

python中怎么获取程序执行文件路径,很多新手对此不是很清楚,为了帮助大家解决这个难题,下面小编将为大家详细讲解,有这方面需求的...

-

Python中如何获取文件系统的使用率

Python中如何获取文件系统的使用率,针对这个问题,这篇文章详细介绍了相对应的分析和解答,希望可以帮助更多想解决这个问题的小伙伴...

-

Python中怎么获取文件的创建和修改时间

这篇文章将为大家详细讲解有关Python中怎么获取文件的创建和修改时间,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读...

-

python中怎么获取依赖包

今天就跟大家聊聊有关python中怎么获取依赖包,可能很多人都不太了解,为了让大家更加了解,小编给大家总结了以下内容,希望大家根据...

-

python怎么实现批量文件加密功能

-

python中怎么实现threading线程同步

小编给大家分享一下python中怎么实现threading线程同步,希望大家阅读完这篇文章之后都有所收获,下面让我们一起去探讨吧!...

-

python下thread模块创建线程的方法

本篇内容介绍了“python下thread模块创建线程的方法”的有关知识,在实际案例的操作过程中,不少人都会遇到这样的困境,接下来...