How to Use Web Scraping to Extract Data from HTML Pages


This article explains how to scrape data from HTML web pages. Common ways to obtain data from the web include:

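- Scraping HTML web pages

- Downloading data files directly, e.g. CSV, TXT, or PDF files

- Accessing data through an application programming interface (API), e.g. a movie database or Twitter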

If you choose to scrape web pages, you first need to understand the basic structure of an HTML page.

The basic structure of HTML

HTML tags: head, body, p, a, form, table, and so on.

Tags can have attributes. For example, the a tag has an href attribute, which holds the target of the link.
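To see how BeautifulSoup exposes a tag's name and attributes, here is a minimal sketch that parses a small hypothetical HTML snippet. The page content and the URL in it are placeholders, not taken from the original.

```python
from bs4 import BeautifulSoup

# A tiny hypothetical page using the head, body, p and a tags
html_doc = """
<html>
  <head><title>Demo</title></head>
  <body>
    <p>Visit <a href="https://example.com/docs">the docs</a>.</p>
  </body>
</html>
"""

soup = BeautifulSoup(html_doc, "html.parser")

link = soup.find("a")       # first <a> tag in the document
print(link.name)            # the tag name: a
print(link["href"])         # the href attribute holds the link target
print(link.get_text())      # the text between <a> and </a>
```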

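class and id are special attributes that HTML uses to control the style of each element through Cascading Style Sheets (CSS). id is a unique identifier for an element, while class is used to group elements for styling.

An element can be associated with multiple classes. The classes are separated by spaces, e.g. `<h3 class="city main">London</h3>`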
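A minimal sketch of looking elements up by id and by class with BeautifulSoup, using a hypothetical snippet modeled on the city example. The keyword argument is spelled class_ (trailing underscore) because class is a reserved word in Python, and BeautifulSoup returns a multi-valued class attribute as a list. select() accepts CSS selector syntax.

```python
from bs4 import BeautifulSoup

# Hypothetical snippet modeled on the city/main example
html_doc = """
<h2 id="intro">Cities</h2>
<h3 class="city main">London</h3>
<h3 class="city">Paris</h3>
<h3 class="city">Tokyo</h3>
"""
soup = BeautifulSoup(html_doc, "html.parser")

# id is unique, so find() is enough to get the single element
intro = soup.find(id="intro")
print(intro.get_text())                  # Cities

# class groups elements: every h3 whose class list contains "city"
cities = [h3.get_text() for h3 in soup.find_all("h3", class_="city")]
print(cities)                            # ['London', 'Paris', 'Tokyo']

# class is multi-valued, so its value comes back as a list
london = soup.find("h3", class_="main")
print(london["class"])                   # ['city', 'main']

# CSS selector: h3 elements that carry both classes
print([h3.get_text() for h3 in soup.select("h3.city.main")])  # ['London']
```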

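The figure below is an example from W3Schools: the city class sets three style properties and the main class sets one. The London heading uses both classes, city and main, so it is rendered as shown in the figure.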

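Tags can be referred to by their positions relative to one another:

- child: a child is a tag inside another tag; e.g. the two p tags are children of the div tag.

- parent: a parent is a tag that contains another tag; e.g. the html tag is the parent of the body tag.

- siblings: siblings are tags that have the same parent; e.g. in the HTML example, the head and body tags are siblings because both are directly inside html, and the two p tags are siblings because both are inside the div.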
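These relationships map onto BeautifulSoup attributes and methods. The sketch below uses a hypothetical page in which a div holds two p tags. Note that .children also yields the whitespace-only text between tags, which is why the code keeps only Tag objects, and that find_next_sibling(True) matches the next sibling that is a tag.

```python
from bs4 import BeautifulSoup, Tag

# Hypothetical page: html > head, body; body > div > p, p
html_doc = """<html>
<head><title>Demo</title></head>
<body>
<div>
<p>First paragraph</p>
<p>Second paragraph</p>
</div>
</body>
</html>"""
soup = BeautifulSoup(html_doc, "html.parser")

div = soup.find("div")

# children: direct children of div, skipping whitespace text nodes
children = [c for c in div.children if isinstance(c, Tag)]
print([c.name for c in children])                  # ['p', 'p']

# parent: the tag that directly contains body
print(soup.body.parent.name)                       # html

# siblings: tags that share the same parent
first_p = div.find("p")
print(first_p.find_next_sibling("p").get_text())   # Second paragraph
print(soup.head.find_next_sibling(True).name)      # body
```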

Scraping a web page in four steps:

Step 1: Install the modules

Install requests and beautifulsoup4 to fetch and parse web pages (scrapy, selenium, and other libraries are alternatives):

- requests: lets you send HTTP/1.1 requests from Python.

- BeautifulSoup: a web page parsing library.

To install them, open a terminal (Mac) or the Anaconda Command Prompt (Windows) and run, for example, `pip install requests beautifulsoup4`.
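After installing, one quick way to confirm that both packages are importable is to look them up with importlib. The helper name missing_modules is hypothetical and not part of the original workflow. Note that the package is published as beautifulsoup4 but imported as bs4.

```python
import importlib.util

# The PyPI package "beautifulsoup4" is imported under the module name "bs4"
REQUIRED_MODULES = ["requests", "bs4"]

def missing_modules(names):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]

missing = missing_modules(REQUIRED_MODULES)
if missing:
    print("Missing modules:", ", ".join(missing))
else:
    print("All required modules are installed.")
```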

Step 2: Use the installed packages to read the page source
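As a sketch of Step 2, the helper below wraps requests.get. It adds a timeout so the call cannot hang indefinitely, and it uses raise_for_status() so 4xx/5xx responses raise an error instead of being parsed as if they were the page you wanted. The name fetch_html and its session parameter are hypothetical choices for this illustration.

```python
import requests

def fetch_html(url, timeout=10.0, session=None):
    """Download a page and return its decoded HTML text.

    session may be a requests.Session (to reuse connections across many
    pages); when omitted, the module-level requests.get is used.
    """
    client = session if session is not None else requests
    # The timeout (in seconds) stops the call from waiting forever
    response = client.get(url, timeout=timeout)
    # Raise requests.HTTPError for 4xx/5xx status codes
    response.raise_for_status()
    return response.text
```

Usage would look like `html = fetch_html("https://example.com/")`, where the URL is a placeholder. The returned string can be passed straight to BeautifulSoup.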

Step 3: Browse the page source to find where the information you need is located

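How you view the page source differs between browsers:

- Firefox: right-click the web page and select "View Page Source".

- Safari: enable the Develop menu in Safari's Advanced settings, then choose Develop > Show Page Source.

- Internet Explorer: right-click the web page and select "View source".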
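Besides reading the source in the browser, you can also search the parsed page from Python for a piece of text you can see on screen, then inspect the tag that contains it. A minimal sketch; locate_text and the sample markup are hypothetical.

```python
import re
from bs4 import BeautifulSoup

def locate_text(html, pattern):
    """Return (tag name, attributes) for each element whose text matches pattern."""
    soup = BeautifulSoup(html, "html.parser")
    locations = []
    # string= matches text nodes; .parent is the tag that directly contains the text
    for text_node in soup.find_all(string=re.compile(pattern)):
        parent = text_node.parent
        locations.append((parent.name, dict(parent.attrs)))
    return locations

sample = '<div id="weather"><h3 class="city main">London</h3><p>Rainy</p></div>'
print(locate_text(sample, "London"))   # [('h3', {'class': ['city', 'main']})]
```

The tag name and attributes it reports are what you would then pass to find() or find_all() in Step 4.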

Step 4: Start extracting

Common scraping tools:

- BeautifulSoup: simple to use; supports CSS selectors but not XPath.

- scrapy: supports both CSS selectors and XPath.

- Selenium: can scrape dynamic pages (e.g. pages that keep loading new content as you scroll down).

- lxml, and others.

Key objects in BeautifulSoup:

- Tag: an XML or HTML tag.

- Name: every tag has a name.

- Attributes: a tag may have any number of attributes. A tag's attributes are stored as a dictionary of the form {attribute1_name: attribute1_value, attribute2_name: attribute2_value, ...}. If an attribute has multiple values, the value is stored as a list.

- NavigableString: the text within a tag.

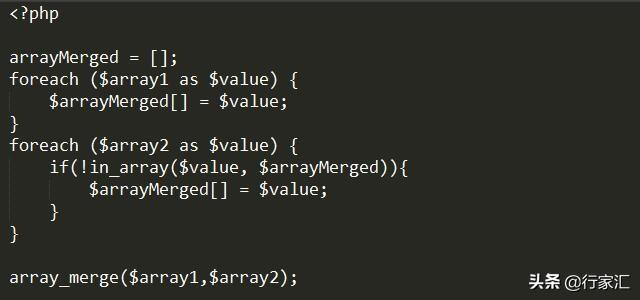
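The BeautifulSoup objects listed above can be inspected directly. A minimal sketch on a one-line hypothetical snippet, showing a Tag, its name, its attribute dictionary (with the multi-valued class stored as a list), and the NavigableString holding its text:

```python
from bs4 import BeautifulSoup, NavigableString, Tag

soup = BeautifulSoup('<p class="intro lead" id="p1">Hello, world</p>', "html.parser")
p = soup.p

print(isinstance(p, Tag))    # True: p is a Tag object
print(p.name)                # p
print(p.attrs)               # {'class': ['intro', 'lead'], 'id': 'p1'}

text = p.string              # the single text node inside the tag
print(isinstance(text, NavigableString), str(text))  # True Hello, world
```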

Here is the code:

# Import requests and beautifulsoup packages

from IPython.core.interactiveshell import InteractiveShell

# In Jupyter, display the value of every bare expression in a cell, not only the last one

InteractiveShell.ast_node_interactivity="all"

# import requests package

import requests

# import BeautifulSoup from package bs4 (i.e. beautifulsoup4)

from bs4 import BeautifulSoup

# Get web page content

# send a GET request to the web page
# (hypothetical placeholder URL: replace it with the page you want to scrape)

page=requests.get("https://example.com/simple.html")

# status code 200 indicates success
# status codes of 400 and above indicate a client or server error

if page.status_code==200:

    # the content property gives the response body as bytes

    print(page.content) # text in bytes

    print(page.text) # text decoded to a Unicode string

# Parse web page content

# Process the returned content using beautifulsoup module

# initiate a beautifulsoup object using the html source and Python’s html.parser

soup=BeautifulSoup(page.content, 'html.parser')

# the soup object represents the root node of the HTML document tree

print("Soup object:")

# print soup object nicely

print(soup.prettify())

# soup.children returns an iterator of all children nodes

print("\nsoup children nodes:")

soup_children=soup.children

print(soup_children)

# convert to list

soup_children=list(soup.children)

print("\nnumber of children of root:")

print(len(soup_children))

# html is the only child of the root node

html=soup_children[0]

html

# Get head and body tag

html_children=list(html.children)

print("how many children under html? ", len(html_children))

for idx, child in enumerate(html_children):

    print("Child {} is: {}\n".format(idx, child))

# head is the second child of html (index 1);
# child 0 is the whitespace text between <html> and <head>

head=html_children[1]

# extract all text inside head

print("\nhead text:")

print(head.get_text())

# body is the fourth child of html (index 3);
# children 0 and 2 are whitespace text nodes

body=html_children[3]

# Get details of a tag

# get the first p tag in the div of body
# (index 1 again skips the leading whitespace text node)

div=list(body.children)[1]

p=list(div.children)[1]

p

# get the details of p tag

# first, get the data type of p

print("\ndata type:")

print(type(p))

# get tag name (property of p object)

print("\ntag name: ")

print(p.name)

# a tag object with attributes has a dictionary

# use <tag>.attrs to get the dictionary

# each attribute name of the tag is a key

# get all attributes

p.attrs

# get "class" attribute

print("\ntag class: ")

print(p["class"])

# how to determine if 'id' is an attribute of p?

# get text of p tag

p.get_text()
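The code above leaves open how to check whether id is an attribute of p. A minimal sketch of three ways to answer that, on a hypothetical page with the same head/body/div/p layout. It also looks tags up by name instead of by position in .children; this is an alternative to the original's indexing, not its method, and it does not depend on the whitespace text nodes between tags.

```python
from bs4 import BeautifulSoup

# Hypothetical page with the same head/body/div/p layout as the example above
soup = BeautifulSoup("""<html>
<head><title>Demo</title></head>
<body>
<div>
<p class="note">Some text</p>
</div>
</body>
</html>""", "html.parser")

# Look tags up by name rather than by index,
# so whitespace-only text nodes between tags do not shift positions
head = soup.head
p = soup.body.find("div").find("p")

# Three ways to check whether 'id' is an attribute of p
print("id" in p.attrs)       # False
print(p.has_attr("id"))      # False
print(p.get("id") is None)   # True: get() returns None instead of raising KeyError
print(p.get("class"))        # ['note']
```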

Thanks for reading. That covers the basics of scraping HTML web pages; how well these techniques fit a particular site is best verified in practice. This article was published on 恰卡编程网.
