How to scrape download links from a video website with Python

This article shows how to use Python to scrape download links from a film and TV website. The code is listed in full and may serve as a reference.

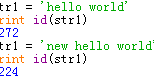

Program output

Importing the modules

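    import requests, re
    from requests.cookies import RequestsCookieJar
    # The next three lines are imported by the original script but not used in the code shown here.
    from fake_useragent import UserAgent
    import os, pickle, threading, time
    import concurrent.futures
    # with_goto comes from the third-party goto-statement package.
    from goto import with_goto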

Main crawler code

    def get_content_url_name(url):
        """Return (href, title) tuples for every thumbnail link on the page at url."""
        send_headers = {
            "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.103 Safari/537.36",
            "Connection": "keep-alive",
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8",
            "Accept-Language": "zh-CN,zh;q=0.8"
        }
        cookie_jar = RequestsCookieJar()
        cookie_jar.set("mttp", "9740fe449238", domain="www.yikedy.co")
        # Pass headers by keyword: the second positional argument of requests.get is params.
        response = requests.get(url, headers=send_headers, cookies=cookie_jar)
        response.encoding = 'utf-8'
        content = response.text
        reg = re.compile(r'<a href="(.*?)" class="thumbnail-img" title="(.*?)"')
        url_name_list = reg.findall(content)
        return url_name_list

    def get_content(url):
        """Fetch url with browser-like headers and the site cookie; return the page text."""
        send_headers = {
            "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.103 Safari/537.36",
            "Connection": "keep-alive",
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8",
            "Accept-Language": "zh-CN,zh;q=0.8"
        }
        cookie_jar = RequestsCookieJar()
        cookie_jar.set("mttp", "9740fe449238", domain="www.yikedy.co")
        # Pass headers by keyword: the second positional argument of requests.get is params.
        response = requests.get(url, headers=send_headers, cookies=cookie_jar)
        response.encoding = 'utf-8'
        return response.text
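Each function in this article builds the same header dictionary and cookie jar before calling `requests.get`. In `requests`, the second positional parameter of `get` is `params`, so a header dictionary has to be passed as `headers=`. One way to avoid repeating the setup is a shared `requests.Session`. The sketch below is only an illustration: `make_session` and `fetch` are hypothetical names, and the 10-second timeout is an assumption, not something the original script sets.

```python
import requests

SEND_HEADERS = {
    "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/51.0.2704.103 Safari/537.36",
    "Connection": "keep-alive",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,"
              "image/webp,image/apng,*/*;q=0.8",
    "Accept-Language": "zh-CN,zh;q=0.8",
}


def make_session():
    """Hypothetical helper: one session carrying the article's headers and cookie."""
    session = requests.Session()
    session.headers.update(SEND_HEADERS)
    session.cookies.set("mttp", "9740fe449238", domain="www.yikedy.co")
    return session


def fetch(session, url):
    """Hypothetical helper: GET url through the session and return UTF-8 text."""
    response = session.get(url, timeout=10)  # timeout value is an assumption
    response.encoding = "utf-8"
    return response.text
```

With this in place, a function such as `get_content(url)` could reduce to `fetch(session, url)` with a session created once at startup.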

    def search_durl(url):
        """Return the download links found for the detail page at url."""
        content = get_content(url)
        # The page writes the key 'decriptParam' as hex escapes (\x64\x65...); capture its value.
        reg = re.compile(r"{'\\x64\\x65\\x63\\x72\\x69\\x70\\x74\\x50\\x61\\x72\\x61\\x6d':'(.*?)'}")
        index = reg.findall(content)[0]
        # Drop the last five characters of the detail URL and append the download-list path.
        download_url = url[:-5] + r'/downloadList?decriptParam=' + index
        content = get_content(download_url)
        reg1 = re.compile(r'title=".*?" href="(.*?)"')
        download_list = reg1.findall(content)
        return download_list
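The URL construction in `search_durl` can be tried offline. The hex escapes in the pattern spell `decriptParam`, and the function takes the first match with `[0]`, which raises `IndexError` when the page has no such value. The sketch below returns `None` in that case instead. `build_download_url`, the sample page fragment, and the sample detail URL are made up for illustration; only the pattern, the `[:-5]` slice, and the `/downloadList?decriptParam=` path come from the article.

```python
import re

# Same pattern as in the article: the key is written as literal \xNN escapes in the page.
PARAM_RE = re.compile(
    r"{'\\x64\\x65\\x63\\x72\\x69\\x70\\x74\\x50\\x61\\x72\\x61\\x6d':'(.*?)'}"
)


def build_download_url(detail_url, page_html):
    """Hypothetical helper: return the download-list URL, or None if no parameter is found."""
    match = PARAM_RE.search(page_html)
    if match is None:
        return None
    # Same slice as the article: drop the last five characters of the detail URL.
    return detail_url[:-5] + "/downloadList?decriptParam=" + match.group(1)


# Invented page fragment and URL, used only to exercise the function.
sample_html = r"var cfg = {'\x64\x65\x63\x72\x69\x70\x74\x50\x61\x72\x61\x6d':'abc123'};"
sample_url = "http://www.yikedy.co/detail/1.html"
result = build_download_url(sample_url, sample_html)
```

Returning `None` lets the caller print a "nothing found" message rather than crash on a page whose layout has changed.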

    def get_page(url):
        """Return (href, title, link text) tuples for every result on the search page at url."""
        send_headers = {
            "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.103 Safari/537.36",
            "Connection": "keep-alive",
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8",
            "Accept-Language": "zh-CN,zh;q=0.8"
        }
        cookie_jar = RequestsCookieJar()
        cookie_jar.set("mttp", "9740fe449238", domain="www.yikedy.co")
        # Pass headers by keyword: the second positional argument of requests.get is params.
        response = requests.get(url, headers=send_headers, cookies=cookie_jar)
        response.encoding = 'utf-8'
        content = response.text
        reg = re.compile(r'<a target="_blank" class="title" href="(.*?)" title="(.*?)">(.*?)<\/a>')
        url_name_list = reg.findall(content)
        return url_name_list
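The regular expression in `get_page` only matches when the attributes of the `<a>` tag appear in exactly the order written in the pattern. As an alternative (not the article's method), the standard-library `html.parser` module can pick out the same links regardless of attribute order. In the sketch below, `TitleLinkParser`, `parse_search_results`, and the sample markup are hypothetical; the sketch returns `(href, title)` pairs, whereas the article's pattern also captures the link text as a third element.

```python
from html.parser import HTMLParser


class TitleLinkParser(HTMLParser):
    """Collect (href, title) from every <a> tag whose class list contains "title"."""

    def __init__(self):
        super().__init__()
        self.results = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        classes = (attr.get("class") or "").split()
        if "title" in classes and attr.get("href"):
            self.results.append((attr["href"], attr.get("title") or ""))


def parse_search_results(html):
    """Hypothetical helper: parse search-page HTML into a list of (href, title) pairs."""
    parser = TitleLinkParser()
    parser.feed(html)
    return parser.results


# Invented markup, used only to exercise the parser.
sample = (
    '<div><a target="_blank" class="title" href="/detail/1.html" '
    'title="Example Show">Example Show</a>'
    '<a class="other" href="/x">x</a></div>'
)
links = parse_search_results(sample)
```

Because `main()` only reads `i[0]` and `i[1]` from each entry, pairs of this shape would be enough for its selection logic.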

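    @with_goto
    def main():
        print("=========================================================")
        name = input("Enter a show title (type quit to exit): ")
        if name == "quit":
            exit()
        url = "http://www.yikedy.co/search?query=" + name
        dlist = get_page(url)
        print("\n")
        if dlist:
            num = 0
            count = 0
            # List every search result whose title contains the query, numbered from 0.
            for i in dlist:
                if name in i[1]:
                    print(f"{num} {i[1]}")
                    num += 1
                elif num == 0 and count == len(dlist) - 1:
                    # Last result reached and nothing matched.
                    goto .end
                count += 1
            dest = int(input("\n\nEnter the number of the show (enter 100 to skip this search): "))
            if dest == 100:
                goto .end
            x = 0
            print("\nDownload links:\n")
            # Walk the matching results again until the chosen number is reached.
            for i in dlist:
                if name in i[1]:
                    if x == dest:
                        for durl in search_durl(i[0]):
                            print(f"{durl}\n")
                        print("\n")
                        break
                    x += 1
        else:
            label .end
            print("Not found, or skipped\n")

Complete code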

The complete script consists of the import block and the five functions listed above, followed by these module-level lines. They print the author's notice once, then call main() in a loop until the user types quit:

    print("This software belongs to CLY.\n\n")

    while True:
        main()
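`main()` depends on the third-party `goto` decorator to jump to the "not found" branch from two places. The same selection logic can be expressed with two small plain functions, which also makes it testable without any network access. This is a sketch of an alternative, not the author's code: `matching_entries` and `choose_entry` are hypothetical names, and treating an out-of-range number as "skip" is an added assumption (the original would print no links in that case).

```python
def matching_entries(name, dlist):
    """Keep only the search results whose title (element 1) contains the query."""
    return [entry for entry in dlist if name in entry[1]]


def choose_entry(matches, dest, skip_code=100):
    """Return the entry numbered dest, or None when the user skips or the number is invalid."""
    if dest == skip_code or not 0 <= dest < len(matches):
        return None
    return matches[dest]


# Invented search results in the (href, title, link text) shape returned by get_page.
dlist = [
    ("/a.html", "Foo 1", "Foo 1"),
    ("/b.html", "Bar", "Bar"),
    ("/c.html", "Foo 2", "Foo 2"),
]
matches = matching_entries("Foo", dlist)
picked = choose_entry(matches, 1)
```

A goto-free `main()` would then print the "not found or skipped" message whenever `matches` is empty or `choose_entry` returns `None`, and otherwise pass `picked[0]` to `search_durl`.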

推荐阅读

-

Python中怎么动态声明变量赋值

这篇文章将为大家详细讲解有关Python中怎么动态声明变量赋值,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读完这篇文...

-

python中变量的存储原理是什么

-

Python中怎么引用传递变量赋值

这篇文章将为大家详细讲解有关Python中怎么引用传递变量赋值,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读完这篇文...

-

python中怎么获取程序执行文件路径

python中怎么获取程序执行文件路径,很多新手对此不是很清楚,为了帮助大家解决这个难题,下面小编将为大家详细讲解,有这方面需求的...

-

Python中如何获取文件系统的使用率

Python中如何获取文件系统的使用率,针对这个问题,这篇文章详细介绍了相对应的分析和解答,希望可以帮助更多想解决这个问题的小伙伴...

-

Python中怎么获取文件的创建和修改时间

这篇文章将为大家详细讲解有关Python中怎么获取文件的创建和修改时间,文章内容质量较高,因此小编分享给大家做个参考,希望大家阅读...

-

python中怎么获取依赖包

今天就跟大家聊聊有关python中怎么获取依赖包,可能很多人都不太了解,为了让大家更加了解,小编给大家总结了以下内容,希望大家根据...

-

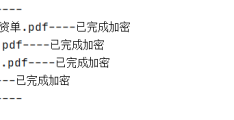

python怎么实现批量文件加密功能

-

python中怎么实现threading线程同步

小编给大家分享一下python中怎么实现threading线程同步,希望大家阅读完这篇文章之后都有所收获,下面让我们一起去探讨吧!...

-

python下thread模块创建线程的方法

本篇内容介绍了“python下thread模块创建线程的方法”的有关知识,在实际案例的操作过程中,不少人都会遇到这样的困境,接下来...